Agentic AI vs. Traditional Chatbots: What Fintech Companies Need to Know

You've tried chatbots. Most fintech teams have.

You deployed one with real optimism: 24/7 support, faster responses, reduced load on your human team. The demo looked great.

Then it went live.

Users hit scripted loops and couldn't escape. KYC queries got generic responses. Compliance conversations had no audit trail. Onboarding broke the moment anything unusual came up. Support tickets didn't drop; they just changed shape. Instead of "I need help," you started seeing "your chatbot is useless."

This isn't a vendor problem. It's an architecture problem. Traditional chatbots were never built for what fintech actually needs.

Agentic AI is not a better chatbot. It's a fundamentally different category, built for the compliance complexity, multi-step journeys, and contextual depth that fintech demands.

Here's what you need to know to tell them apart.

What Each Actually Is

Traditional Chatbots: Rule-Based, Scripted, Reactive

A traditional chatbot is a decision tree with a conversational skin. It matches user inputs to predefined rules and returns a scripted response. If the user says something anticipated, it works. If they don't, it breaks.

Chatbots are reactive. They respond. They don't initiate, don't adapt, and have no memory: not within a session, not across sessions. Every conversation starts from zero.

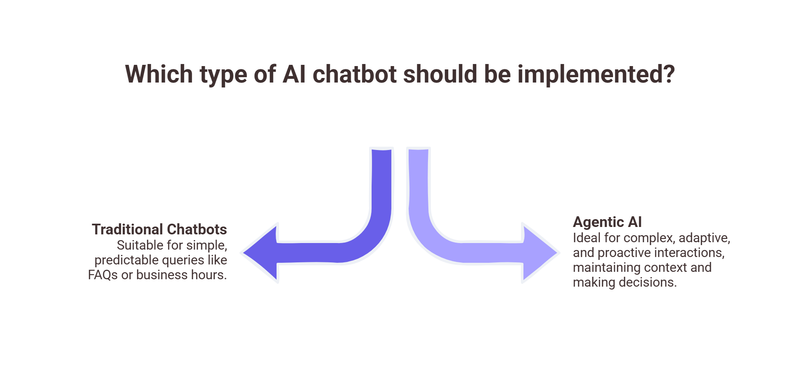

The underlying logic: IF user says X, THEN respond with Y. Useful for simple, predictable queries like FAQs or business hours. Not useful for anything requiring judgment, context, or adaptability.

In fintech, almost everything requires judgment, context, or adaptability.
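The IF-X-THEN-Y logic described above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation; the rule table, the replies, and the `scripted_reply` function are all hypothetical names invented for the example.

```python
# Hypothetical rule table: keyword -> scripted reply (illustrative only).
RULES = {
    "business hours": "We are open 9am to 6pm, Monday to Friday.",
    "reset password": "Tap 'Forgot password' on the login screen.",
}

# What the user sees whenever no rule matches.
FALLBACK = "Sorry, I didn't understand that. Please choose an option from the menu."


def scripted_reply(message: str) -> str:
    """Return the first scripted reply whose keyword appears in the message."""
    text = message.lower()
    for keyword, reply in RULES.items():
        if keyword in text:  # IF user says X ...
            return reply     # ... THEN respond with Y
    return FALLBACK          # anything unanticipated falls through


# An anticipated query matches a rule.
print(scripted_reply("What are your business hours?"))
# A real KYC problem matches nothing and gets the generic fallback.
print(scripted_reply("My Aadhaar address doesn't match my current address"))
```

The second call is the failure mode in miniature: the user's problem is specific, but the only thing the script can return is the fallback.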

Agentic AI: Autonomous, Adaptive, Proactive, Context-Aware

An AI agent is built on large language models combined with a goal-oriented execution layer. It doesn't match keywords to scripts; it understands intent, maintains context across entire journeys, makes decisions based on evolving information, and takes actions.

The key word is agentic. An agent has goals and works toward them. When a user starts onboarding, pauses for three days, and returns, the agent knows where they were, what failed, and what comes next. It doesn't start over. It picks up.

Agentic AI is also proactive. It initiates based on triggers — a user who hit an upload error and hasn't returned, a lead who browsed a product page twice without converting. It doesn't wait to be asked.

Critically for fintech: it operates within guardrails. You define what it can do, what it must escalate, what it must log. This is what makes it viable for regulated environments where chatbots couldn't go.
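One way to picture "what it can do, what it must escalate, what it must log" is a small policy check that runs before every agent action. The sketch below is an assumption about how such a layer might look, not a description of a specific product; `GuardrailPolicy`, `Decision`, and the action names are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"
    BLOCK = "block"


@dataclass
class GuardrailPolicy:
    """Hypothetical policy: what the agent may do, what needs a human, and a log."""
    allowed_actions: set
    escalate_actions: set
    log: list = field(default_factory=list)

    def check(self, action: str) -> Decision:
        if action in self.escalate_actions:
            decision = Decision.ESCALATE   # must be handed to a human
        elif action in self.allowed_actions:
            decision = Decision.ALLOW      # agent may proceed on its own
        else:
            decision = Decision.BLOCK      # default deny for anything undefined
        self.log.append((action, decision.value))  # every check is recorded
        return decision


policy = GuardrailPolicy(
    allowed_actions={"explain_kyc_error", "send_upload_link"},
    escalate_actions={"change_account_status"},
)
print(policy.check("explain_kyc_error"))
print(policy.check("change_account_status"))
print(policy.check("recommend_fund"))
```

The default-deny branch is the design choice worth noting: an action nobody defined is blocked and logged rather than attempted.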

10 Criteria That Matter in Fintech

Each row maps to a failure mode fintech companies experience with chatbots daily. The question isn't which looks best on a slide; it's which rows are currently costing you customers.

Where Chatbots Fail in Fintech — Specifically

a) KYC Conversations Require Adaptability

KYC is not a linear process. Users arrive with different documents, different literacy levels, different problems. A user whose Aadhaar address doesn't match their current address needs a different conversation than someone whose PAN card image is blurry.

A chatbot has one script for KYC. When reality diverges, which it does for a significant portion of users, the conversation breaks. The user gets a non-answer and leaves.

EY's Global FinTech Adoption Index found that unclear onboarding instructions rank among the top three reasons for digital financial product abandonment in emerging markets.

The chatbot doesn't just fail the interaction; it fails the user's trust in digital finance broadly.

b) Compliance Requires Guardrails and Audit Trails

Every customer interaction touching account status, KYC, or investment suitability carries regulatory weight. What was said, when, and by whom must be defensible.

Traditional chatbots have no audit trail that satisfies regulatory review. They can't apply dynamic guardrails, such as blocking certain responses until a suitability assessment is complete. Regulators in India, the UK, and the EU are increasingly scrutinising AI use in financial services. A chatbot that can't explain what it said is a compliance exposure, not just a UX problem.
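To make "what was said, when, and by whom" concrete, here is a minimal sketch of a dynamic guardrail paired with an audit record. It assumes an in-memory list as a stand-in for a real append-only store; `record`, `reply_about_product`, and the field names are hypothetical, and a production system would need tamper-evidence and retention rules that this example does not attempt.

```python
import json
from datetime import datetime, timezone

# Stand-in for an append-only audit store (assumption: a real system would
# write to durable, tamper-evident storage instead of a Python list).
audit_log = []


def record(user_id: str, speaker: str, text: str) -> None:
    """Append one timestamped entry: who said what, and when (UTC)."""
    entry = {
        "at": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "speaker": speaker,
        "text": text,
    }
    audit_log.append(json.dumps(entry))


def reply_about_product(user_id: str, suitability_done: bool, draft: str) -> str:
    """Release the drafted reply only once the suitability assessment is complete."""
    if suitability_done:
        reply = draft
    else:
        reply = "I can share that once your suitability assessment is complete."
    record(user_id, "agent", reply)  # log what was actually sent, not the draft
    return reply


record("user-1", "user", "Which fund should I pick?")
print(reply_about_product("user-1", False, "Here are the details of the fund you asked about."))
print(len(audit_log))
```

Logging the reply that was actually sent, rather than the draft, is what lets someone reconstruct the conversation later.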

c) Onboarding Requires Multi-Step Journey Memory

Fintech onboarding is not a single-session event. Users start on their commute, get interrupted, return on a different device days later, hit an upload error, and pause again.

A chatbot treats every returning user as new. They have to re-establish context, re-explain their situation, and re-upload documents. For already-hesitant users, that's the final reason to quit. Multi-step journey memory isn't a nice-to-have; it's the minimum requirement for a non-linear, multi-session process. Chatbots don't have it. Agents do.
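Journey memory, at its simplest, means persisting per-user state between sessions and reading it back on return. The sketch below assumes a dictionary in place of a database; the step names, `JourneyState`, and the helper functions are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical onboarding steps, in order.
ONBOARDING_STEPS = ["pan_upload", "address_proof", "selfie_check", "bank_link"]


@dataclass
class JourneyState:
    completed: list = field(default_factory=list)
    failed_step: Optional[str] = None
    last_error: Optional[str] = None


store = {}  # stand-in for a database keyed by user id


def record_success(user_id: str, step: str) -> None:
    state = store.setdefault(user_id, JourneyState())
    if step not in state.completed:
        state.completed.append(step)
    if state.failed_step == step:  # a retry succeeded, so clear the failure
        state.failed_step = None
        state.last_error = None


def record_failure(user_id: str, step: str, error: str) -> None:
    state = store.setdefault(user_id, JourneyState())
    state.failed_step = step
    state.last_error = error


def resume(user_id: str) -> str:
    """Build the greeting for a returning (or new) user from stored state."""
    state = store.get(user_id)
    if state is None:
        return f"Welcome! Let's start with {ONBOARDING_STEPS[0]}."
    if state.failed_step:
        return (f"Welcome back. Last time {state.failed_step} failed "
                f"({state.last_error}). Let's retry it.")
    remaining = [s for s in ONBOARDING_STEPS if s not in state.completed]
    if not remaining:
        return "Your onboarding is complete."
    return f"Welcome back. Next step: {remaining[0]}."


record_success("user-1", "pan_upload")
record_failure("user-1", "address_proof", "address mismatch")
print(resume("user-1"))  # days later, on any device
```

Because the state is keyed by user rather than by session or device, the return three days later starts from the failed step instead of from zero.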

d) Lead Nurturing Requires Personalisation

A user who visits your mutual fund platform twice, spends time on the SIP calculator, and reads the tax-saving funds page is signalling intent. A chatbot greets them: "Hi! How can I help you today?" An AI agent says: "You were looking at ELSS funds last time. Want to know how much you could save on taxes with a ₹1.5 lakh SIP?"

Financial products are considered purchases. Generic scripted engagement fails consistently at converting hesitant users. Contextual, personalised engagement converts. The gap between those two interactions is not marginal; it's the difference between a user bouncing and a user investing.

Evaluation Framework — 5 Signals You Need to Upgrade

Signal 1: Drop-off clusters at the same steps. Consistent abandonment at document upload or onboarding stages despite UI changes means the problem is lack of adaptive guidance, not design. A chatbot can't fix this.

Signal 2: Support tickets are about the chatbot itself. When users contact humans to escape your automated system, your automation is net-negative. This is the clearest signal your architecture is wrong.

Signal 3: You serve users in multiple languages. If your growth market includes non-English speakers and your system delivers pre-translated static scripts, you're losing completions in every non-metro geography you operate in.

Signal 4: Your compliance team is uncomfortable. If legal has flagged that the chatbot says things it shouldn't, can't produce conversation logs, or has no escalation path for sensitive queries, you have an active regulatory exposure, not a hypothetical one.

Signal 5: You have no cross-session memory. If a user who started onboarding three days ago is treated as new today, your system has a structural failure that compounds every other problem in your funnel.

Three or more of these signals? You're not running an AI customer experience. You're running a script with a chat window.
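The three-or-more rule can be written down as a simple self-assessment. This is only a sketch of the checklist above; the signal identifiers and the `should_upgrade` function are hypothetical names, and the threshold of three comes from the text.

```python
# The five signals from the framework, as hypothetical identifiers.
SIGNALS = [
    "drop_off_clusters_at_same_steps",
    "tickets_are_about_the_chatbot",
    "multiple_languages_with_static_scripts",
    "compliance_team_uncomfortable",
    "no_cross_session_memory",
]


def count_signals(observed) -> int:
    """Count distinct observed signals, rejecting names not in the framework."""
    unknown = set(observed) - set(SIGNALS)
    if unknown:
        raise ValueError(f"Unknown signals: {sorted(unknown)}")
    return len(set(observed))


def should_upgrade(observed, threshold: int = 3) -> bool:
    """True when at least `threshold` of the five signals are present."""
    return count_signals(observed) >= threshold


print(should_upgrade(["tickets_are_about_the_chatbot", "no_cross_session_memory"]))
print(should_upgrade([
    "tickets_are_about_the_chatbot",
    "no_cross_session_memory",
    "compliance_team_uncomfortable",
]))
```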

The Bottom Line

If your chatbot can't remember where a user left off, can't explain a KYC error in the user's language, can't log a compliance-sensitive conversation, can't escalate with context, and can't personalise beyond a name field, it's not an AI strategy.

Agentic AI was built for exactly the environment fintech operates in: regulated, complex, multi-step, multilingual, and high-stakes. It doesn't just answer questions. It guides journeys, resolves failures, adapts to users, and operates within compliance guardrails.

The fintech companies pulling ahead stopped asking their chatbot to do a job it was never designed for.

Ready to see what an AI agent looks like in a fintech context? See Zigment's AI agents in action.