Breaking Data Silos: The Case for an AI Orchestration Layer

Your nonprofit isn't short on data. It's short on connected data.

Donor records live in the CRM. Beneficiary intake sits in Google Forms. Program outcomes are buried in spreadsheets. Volunteer logs exist in a separate system entirely.

Four systems. One person. Zero unified view.

Nonprofits don't suffer from a lack of data; they suffer from a lack of usable data. Insights are stuck behind passwords, exports, and manual reporting bottlenecks.

The instinct is to fix this by replacing everything. One platform. One migration. One clean slate.

That instinct is expensive. And it rarely works.

There is a smarter path. When data lives in disconnected systems, it becomes a barrier instead of a strength. An AI orchestration layer doesn't replace your legacy tools; it layers intelligence above them. Connecting everything. Finally.

The High Cost of the "Big Bang" Migration

A beneficiary walks into your program.

They filled out a Google Form at intake. They attended three workshops tracked in a spreadsheet. They received donor-funded support logged in your CRM.

Three systems. Three records. No connection.

Your program team doesn't know the full story. Your funders can't see the full impact. And the person you're trying to serve is being failed quietly, structurally, invisibly.

This is the information silo problem. And it is endemic to the nonprofit sector.

The average nonprofit collects data across 5 to 8 disconnected tools: Google Forms for intake, SurveyMonkey for feedback, Excel for attendance, a CRM for donor data, and email for qualitative stories. Without unique participant IDs connecting these systems, staff spend 80% of their data time on cleanup, deduplication, and manual matching. (Sopact, 2025)

That is not a workflow problem. That is a structural failure.

And the instinctive response, "let's migrate to one unified platform," is expensive, risky, and rarely works.

A new technology solution alone is not the answer.

The real work is aligning technology, process, and people. While organizations could theoretically string together disparate systems through APIs or ETLs, there is likely a better approach: one that creates a data platform that key systems can connect to, enabling staff to answer questions that improve constituent experiences, evaluate impact, and forecast outcomes more accurately. (Build Consulting, November 2025)

The "Big Bang" migration doesn't solve the data integration challenges. It relocates them. At enormous cost. With enormous risk.

Gartner predicts that over 40% of agentic AI projects will fail by 2027 because legacy systems can't support modern AI execution demands: they lack the real-time execution capability, modern APIs, modular architectures, and secure identity management needed for true agentic integration. (Deloitte Insights, December 2025)

The problem was never the platform. The problem is the absence of a coordinating intelligence layer sitting above them all.

Defining the AI Orchestration Layer

Stop thinking about replacement.

Start thinking about layering.

For years, the mantra of technology modernization revolved around migration. This journey, while essential, has often felt like an arduous endurance race fraught with technical debt, budget overruns, and the constant struggle to shift focus from merely keeping the lights on to genuine innovation. (NTT DATA, February 2026)

There is a different path.

A marketing orchestration platform (in the nonprofit context, a constituent orchestration layer) doesn't replace your siloed systems. It sits above them. It reads every system simultaneously. It resolves conflicting records. It maintains state across every interaction. And it coordinates the next best action without requiring a single legacy database to be rebuilt.

Most organizations today aren't just experimenting with a single AI model; they're working across multiple LLMs, legacy systems, and newer AI agents simultaneously. Without orchestration, that ecosystem risks quickly becoming fragmented, redundant, and inefficient. AI orchestration is quickly becoming the critical link between data strategy and AI execution.

Beth Scagnoli, VP Product Management, Redpoint Global (CIO Magazine, July 2025)

For a nonprofit, this is mission-critical language.

Your Salesforce Nonprofit Cloud holds donor history. Your program database holds service records. Your email platform holds engagement signals. Your intake forms hold beneficiary needs data.

None of these were designed to talk to each other.

The data orchestration layer is the connective intelligence that makes them act as one: without touching production systems, without budget-breaking migrations, without a six-month implementation cycle.

Instead of ripping and replacing legacy systems, organizations can layer AI on top through incremental modernization, retaining core functionality while adding new AI-powered workflows and interfaces. (Dualboot Partners, October 2025)

This is the smarter move. The safer move. And for resource-constrained nonprofits, the only realistic move.

Building the Marketing Memory Bank

Here is where the architecture becomes genuinely powerful.

The orchestration layer doesn't just pass data. It remembers.

It builds what can be called the Marketing Memory Bank or, in mission-driven language, a Constituent Intelligence Layer: a living, continuously updating unified customer profile that merges every signal (behavioral, transactional, qualitative, longitudinal) into one query-ready timeline per person.

When demographic information lives in your CRM, survey responses sit in Google Forms, and program participation data exists in spreadsheets, you cannot connect information about the same person across these sources. Every analysis begins with manual export-merge-deduplicate cycles. (Sopact, 2025)

This is the Single Customer View problem, except that in a nonprofit it is a Single Constituent View problem. And the cost of not having it is not a missed revenue quarter. It is a missed intervention. A beneficiary who slipped through. A donor who lapsed because nobody noticed the engagement signals dropping.

Real-time constituent profiles, built by connecting CRM data with email engagement, donation history, event attendance, and program impact, enable organizations to move beyond static lists and engage supporters based on real-time behavior and preferences. (Redpath Consulting, October 2025)

The Marketing Memory Bank makes this technically real.

It ingests qualitative signals: session behavior, survey sentiment, conversation tone. It merges them with quantitative signals: donation frequency, program attendance, support request history. It maintains temporal ordering so the sequence of interactions is preserved, not just the data points. And it makes the resulting profile queryable by any downstream system, agent, or team member in real time.
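
To make the merge step concrete, here is a minimal sketch in Python. It assumes each source system can already hand over its signals as a list of `(person_id, day, signal, value)` tuples sorted by day. The function names `build_profiles` and `signals_since` and the sample signal names are illustrative assumptions, not part of any specific product.

```python
import heapq
from collections import defaultdict
from datetime import date


def build_profiles(*sources):
    """Merge per-source signal streams into one time-ordered timeline per person.

    Each source is a list of (person_id, day, signal, value) tuples that is
    already sorted by day (an assumption of this sketch).
    """
    merged = heapq.merge(*sources, key=lambda event: event[1])
    profiles = defaultdict(list)
    for person_id, day, signal, value in merged:
        profiles[person_id].append((day, signal, value))
    return dict(profiles)


def signals_since(profile, since):
    """Query one timeline: every signal recorded on or after `since`."""
    return [event for event in profile if event[0] >= since]


# Hypothetical sample data: quantitative and qualitative signals for one person.
donations = [
    ("p1", date(2025, 1, 5), "donation", 50),
    ("p1", date(2025, 3, 1), "donation", 25),
]
surveys = [("p1", date(2025, 2, 10), "survey_sentiment", "positive")]

profiles = build_profiles(donations, surveys)
recent = signals_since(profiles["p1"], date(2025, 2, 1))
```

The point of the sketch is the ordering: the survey response lands between the two donations, so the sequence of interactions survives the merge instead of being flattened into per-system totals.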

Your grant report no longer requires a six-week data preparation cycle.

Your program coordinator knows a beneficiary's full history before the next session.

Your development team sees which donors are showing re-engagement signals before they lapse permanently.

This is customer data management operating at the intelligence layer. Not the database layer.

Overcoming Roadblocks: How Orchestration Tools Work

Let's get technical.

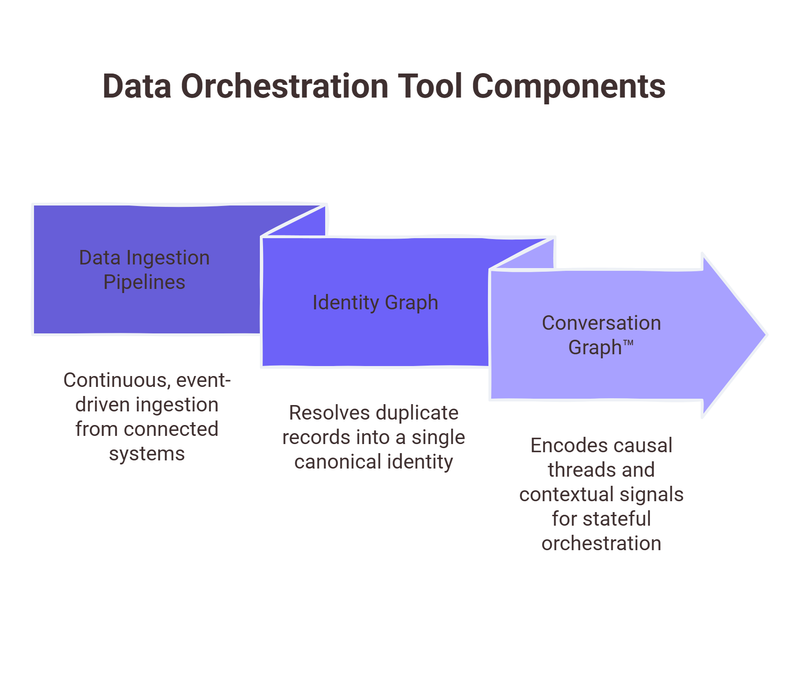

Modern data orchestration tools, deployed as an AI layer above legacy nonprofit stacks, operate across three interconnected technical components.

Component 1: Data Ingestion Pipelines.

Continuous, event-driven ingestion from every connected system. Form submissions. CRM field updates. Email open events. Program attendance records. Volunteer log entries. All normalized into a canonical event schema in real time. No batch exports. No manual merges. Schema validation at the point of ingestion stops corrupt data before it propagates downstream.
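
A canonical event schema with validation at ingestion can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a reference implementation: the `CanonicalEvent` fields, the `normalize` function, and the rule that every raw record must carry an `email` and an ISO-8601 `timestamp` are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class CanonicalEvent:
    source: str            # e.g. "google_forms" or "crm" (hypothetical labels)
    event_type: str        # e.g. "intake_submitted"
    person_key: str        # normalized email, used later for identity matching
    occurred_at: datetime  # always timezone-aware
    payload: dict          # everything else from the raw record


# Assumed minimum contract for every incoming record.
REQUIRED_FIELDS = {"email", "timestamp"}


def normalize(source: str, event_type: str, raw: dict) -> CanonicalEvent:
    """Validate a raw record and map it onto the canonical schema."""
    missing = REQUIRED_FIELDS - set(raw)
    if missing:
        # Reject at the door instead of letting a bad record propagate.
        raise ValueError(f"{source}: missing fields {sorted(missing)}")
    occurred_at = datetime.fromisoformat(raw["timestamp"])
    if occurred_at.tzinfo is None:
        # Assumption: naive timestamps are treated as UTC.
        occurred_at = occurred_at.replace(tzinfo=timezone.utc)
    payload = {k: v for k, v in raw.items() if k not in REQUIRED_FIELDS}
    return CanonicalEvent(
        source, event_type, raw["email"].strip().lower(), occurred_at, payload
    )


event = normalize(
    "google_forms",
    "intake_submitted",
    {"email": " Ana@Example.org ", "timestamp": "2025-03-01T10:00:00", "need": "housing"},
)
```

A record without a timestamp raises a `ValueError` here rather than entering the pipeline, which is the behavior the validation step is meant to guarantee.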

Component 2: Identity Graph.

This is the technical heart of the silo-breaking operation.

Without a unified view, nonprofits struggle to demonstrate impact effectively to funders, make informed decisions based on comprehensive insights, track progress accurately, and build strong stakeholder relationships. (Sopact, 2025)

The identity graph resolves why that happens at the data level. The same beneficiary exists as multiple records: different email addresses across intake systems, name variations across program databases, duplicate entries created by different staff members over time. The identity graph resolves these records both deterministically and probabilistically, scoring entity similarity across multiple matching vectors simultaneously: email domain, phone number, address normalization, behavioral fingerprint, temporal co-occurrence patterns. Output: one canonical identity. One record every downstream system references. Zero duplicates.
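
Here is a deliberately small Python sketch of that two-stage matching. It assumes every record has `name`, `email`, and `phone` keys (possibly empty), treats an exact email or phone match as deterministic, and falls back to fuzzy name similarity as the probabilistic signal. The 0.9 threshold, the field names, and the use of only three matching vectors are assumptions for illustration; a production identity graph would weigh far more signals.

```python
from difflib import SequenceMatcher
from itertools import combinations


def normalize_phone(phone: str) -> str:
    """Keep digits only so '(555) 0101' and '555-0101' compare equal."""
    return "".join(ch for ch in phone if ch.isdigit())


def match_score(a: dict, b: dict) -> float:
    # Deterministic rules: same normalized email or phone counts as a certain match.
    if a["email"] and a["email"].lower() == b["email"].lower():
        return 1.0
    phone_a, phone_b = normalize_phone(a["phone"]), normalize_phone(b["phone"])
    if phone_a and phone_a == phone_b:
        return 1.0
    # Probabilistic fallback: fuzzy similarity of the names, between 0 and 1.
    return SequenceMatcher(None, a["name"].lower(), b["name"].lower()).ratio()


def resolve(records: list[dict], threshold: float = 0.9) -> list[set[int]]:
    """Group record indexes into canonical identities using union-find."""
    parent = list(range(len(records)))

    def find(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path compression
            i = parent[i]
        return i

    for i, j in combinations(range(len(records)), 2):
        if match_score(records[i], records[j]) >= threshold:
            parent[find(i)] = find(j)

    clusters: dict[int, set[int]] = {}
    for i in range(len(records)):
        clusters.setdefault(find(i), set()).add(i)
    return list(clusters.values())


# Hypothetical records: the first two are the same person entered twice.
records = [
    {"name": "Maria Lopez", "email": "maria@example.org", "phone": "555-0101"},
    {"name": "Maria Lopes", "email": "m.lopez@example.com", "phone": "(555) 0101"},
    {"name": "John Smith", "email": "john@example.org", "phone": "555-0199"},
]
identities = resolve(records)
```

The union-find step matters: if record A matches B and B matches C, all three collapse into one identity even when A and C never match directly.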

Component 3: Conversation Graph™.

This is the layer that makes orchestration stateful, and it is what separates true orchestration from middleware.

The Conversation Graph™ doesn't just log events. It encodes the causal thread: what preceded each interaction, what followed it, and what contextual signals surrounded it. Consider a beneficiary who attended two workshops, then stopped responding to outreach, then re-engaged after a peer referral. That narrative sequence carries critical signal. It is invisible to siloed systems. The Conversation Graph™ captures it and makes it actionable.

Current enterprise data architectures, built around ETL processes and data warehouses, create friction for agent deployment. The fundamental issue is that most organizational data isn't positioned to be consumed by agents that need to understand business context and make decisions. (Deloitte Insights, December 2025)

The Conversation Graph™ solves this. It restructures data from a collection of discrete records into a context-aware, temporally ordered intelligence object, one that an AI agent can reason across in real time.
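
The general idea of a temporally ordered, linked record can be shown with a toy Python sketch. This is not how the Conversation Graph™ is implemented; it is a generic illustration in which each interaction keeps a pointer to the one before it, so a sequence such as "outreach unanswered, then peer referral, then attendance" can be detected. The `Node` class, the event names, and both functions are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Node:
    kind: str                      # e.g. "workshop_attended" (hypothetical label)
    at: datetime
    prev: Optional["Node"] = None  # the interaction that preceded this one


def build_thread(events: list[tuple[str, datetime]]) -> list[Node]:
    """Sort raw events by time and link each one to its predecessor."""
    thread: list[Node] = []
    for kind, at in sorted(events, key=lambda e: e[1]):
        thread.append(Node(kind, at, thread[-1] if thread else None))
    return thread


def reengaged_after_referral(thread: list[Node]) -> bool:
    """True if an attendance directly follows a peer referral that itself
    directly follows unanswered outreach."""
    for node in thread:
        referral = node.prev
        if (
            node.kind == "workshop_attended"
            and referral is not None
            and referral.kind == "peer_referral"
            and referral.prev is not None
            and referral.prev.kind == "outreach_unanswered"
        ):
            return True
    return False


# Events arrive out of order from different systems.
events = [
    ("peer_referral", datetime(2025, 4, 2)),
    ("workshop_attended", datetime(2025, 1, 10)),
    ("outreach_unanswered", datetime(2025, 3, 1)),
    ("workshop_attended", datetime(2025, 4, 9)),
    ("workshop_attended", datetime(2025, 1, 24)),
]
thread = build_thread(events)
pattern_found = reengaged_after_referral(thread)
```

None of the five events says "re-engaged" on its own. The signal only appears once the events are ordered and linked, which is the argument for storing the thread rather than the rows.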

By 2028, 70% of organizations building multi-LLM applications and AI agents will use integration platforms to optimize and orchestrate connectivity and data access, up from less than 5% in 2024. (Gartner, via CIO Magazine, 2025)

The organizations building this infrastructure now are not early adopters. They are the organizations that will still be operationally coherent in three years.

And those building it are winning. Companies with strong integration achieve 10.3x ROI from AI initiatives versus just 3.7x for those with poor connectivity. (MuleSoft, 2025)

That's not a marginal gain. That's a structural advantage.

Zigment: The Agentic Brain for Your Legacy Stack

This is where Zigment operates.

Not as another platform to migrate to. As the Agentic AI layer for your marketing stack: a stateful orchestration brain that sits on top of HubSpot, Salesforce, custom CRMs, legacy databases, and everything in between.

Zigment connects the systems you already have. Resolves the identities scattered across them. Builds the unified customer profile your teams have always needed. And then autonomously drives the revenue-focused actions that matter.

High-intent account detected?

Triggered. Right sequence, right moment, right rep, automatically.

Customer showing churn signals across three systems at once?

Escalated. With full context. Before it's too late.

Sales handoff happening?

The rep receives the complete Conversation Graph™. Not a CRM summary. The real story.

Agentic AI has moved into the center of the marketing stack, taking responsibility for entire workflows, such as building and routing campaigns, sequencing actions, and adjusting performance levers without waiting for someone to manually intervene. (Demand Gen Report, December 2025)

74% of executives report achieving ROI from AI agents within the first year. Among those reporting productivity gains, 39% have seen productivity at least double. (Google Cloud ROI of AI Report, 2025)

The gap between organizations that orchestrate and those that don't is already measurable. It is widening fast.