Why Most AI Assistants Fail After the First Conversation

Quick quiz. You've interacted with a brand's AI assistant. Had a genuinely useful conversation. Explained your situation, your budget, maybe even a specific preference.

Then came back two days later on a different channel. What happened?

If you're like most people, the AI greeted you like a complete stranger. "Hi there! How can I help you today?" You started over. You re-explained everything. And somewhere in the background, a customer who was this close to converting quietly decided it wasn't worth the effort.

This is the hallucination problem nobody talks about. Not the flashy kind where an AI confidently invents fake citations or tells you a product exists when it doesn't (though that happens too). The subtler, more commercially damaging kind: where an AI system simply has no memory of what just happened. It's not lying. It just doesn't know. It was never given the tools to know.

Fixing that is what retrieval-augmented AI is all about. And in 2026, it's no longer an experimental concept. It's the foundational architecture separating AI that converts from AI that frustrates.

The Goldfish Problem in Enterprise AI

Here's a useful mental model. Most AI systems, including many that are genuinely impressive at one-off tasks, operate like goldfish. Every conversation is a fresh bowl of water.

The training data provides general intelligence. But the specific, proprietary, real-time knowledge that makes a response actually useful to your customer? Gone. Washed away between sessions, between channels, between the WhatsApp thread and the web chat.

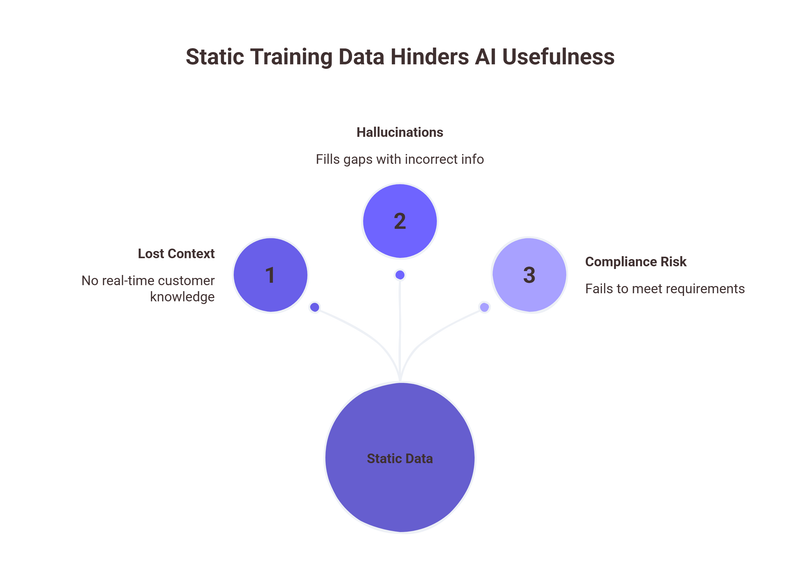

The technical term for why this happens is "static training data." A large language model learns from a massive corpus of information up to a certain point. Then that knowledge freezes. It knows a lot about the world in general. It knows nothing about the lead who filled your form on Sunday, expressed anxiety about pricing on Instagram Monday, and is now asking a very specific question about your cancellation policy on your website Tuesday.

Standard generative AI generates answers based on whatever it "memorized" during training. It cannot naturally access real-time updates, live CRM data, or active conversation histories. And when it doesn't know something?

It often doesn't say "I don't know." It fills the gap. That's where hallucinations come from: not malice, just the model doing its best with incomplete information.

Retrieval-augmented models can reduce hallucination rates by up to 50% compared to standalone LLMs. Which, if you're running a customer-facing AI in healthcare, financial services, or education, is not a nice-to-have. It's a compliance requirement.

What RAG Actually Is (Without the Jargon)

Retrieval-Augmented Generation (RAG, as the industry calls it) sounds technical. But the concept is elegant.

Instead of asking an AI to answer from memory alone, you give it access to a live, curated knowledge store. Before generating any response, the system retrieves the most relevant context (from your CRM, your conversation history, your product database, your customer's behaviour signals) and uses that real information to inform what it says.

Think of it as "open book" answering. The model reads before it writes. The AI isn't guessing based on generalized training. It's reading the actual file before it speaks.

The practical result is enormous. RAG systems integrate up-to-date information from data sources without the need for retraining. Your AI agent doesn't need to be retrained every time your pricing changes, your policy updates, or a customer's situation evolves. You update the knowledge store. The AI retrieves from it. The response is accurate, specific, and grounded.
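
To make the "read before you write" idea concrete, here is a minimal sketch of the retrieve-then-generate loop. Everything in it is an assumption made for illustration: the `knowledge_store` entries are invented, the word-overlap scoring stands in for the embedding search a production system would more likely use, and `build_prompt` only assembles the text that would be handed to whatever language model you run.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge store. In practice this might hold CRM records,
# policy documents, or conversation logs. All entries here are invented.
knowledge_store = {
    "pricing": "The Pro plan costs 49 dollars per month, billed monthly.",
    "cancellation": "Customers can cancel any time; refunds are issued within 14 days.",
    "pause": "A subscription can be paused for up to 3 months without losing data.",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question, store, top_k=1):
    """Return up to top_k (key, text) entries that overlap with the question."""
    query = Counter(tokenize(question))
    scored = [
        (cosine_similarity(query, Counter(tokenize(text))), key, text)
        for key, text in store.items()
    ]
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(key, text) for score, key, text in scored[:top_k] if score > 0]


def build_prompt(question, store):
    """Assemble the grounded prompt that would be sent to a language model."""
    passages = retrieve(question, store)
    if passages:
        context = "\n".join(f"[{key}] {text}" for key, text in passages)
    else:
        # Nothing relevant was found, so tell the model to admit it.
        context = "No relevant context found. Say you do not know."
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


print(build_prompt("How much does the Pro plan cost?", knowledge_store))
```

Changing the price is a one-line edit to `knowledge_store["pricing"]`; the next prompt picks up the new figure with no retraining step. The bracketed key in the context also gives a simple audit trail of which entry an answer was grounded in.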

That's not just technically clever. It's commercially transformative.

The Market Knows This. Do You?

The enterprise world has moved fast. The RAG market was estimated at $1.94 billion in 2025. It's projected to reach $9.86 billion by 2030 — growing at a 38.4% CAGR. And that's one of the more conservative estimates.
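
Those three figures can be sanity-checked against each other with the standard compound-growth formula. The short sketch below assumes five annual compounding periods between the 2025 estimate and the 2030 projection; the variable names are illustrative only.

```python
# Figures quoted in the paragraph above.
start_value = 1.94  # USD billions, 2025 estimate
end_value = 9.86    # USD billions, 2030 projection
years = 2030 - 2025  # assumed number of compounding periods

# CAGR implied by the two endpoints: (end / start) ** (1 / years) - 1
implied_cagr = (end_value / start_value) ** (1 / years) - 1

# Forward check: compound the 2025 estimate at the quoted 38.4% rate.
projected = start_value * (1 + 0.384) ** years

print(f"Implied CAGR: {implied_cagr:.1%}")
print(f"2030 value at 38.4% growth: {projected:.2f} billion USD")
```

The implied rate comes out at roughly 38.4%, and compounding forward lands within rounding distance of the quoted 2030 figure, so the numbers are internally consistent.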

Why is investment piling in? Because the problem it solves is real, measurable, and expensive. Enterprises are now choosing retrieval-augmented generation for 30–60% of their AI use cases — particularly wherever accuracy, transparency, and proprietary data are non-negotiable.

The developer community has reached a consensus too. 80% of enterprise software developers believe RAG is the most effective way to ground LLMs in factual data. That's not a marginal opinion. That's an industry-wide verdict.

For CTOs and data architects, the shift from model-centric to data-centric AI is one of the defining transformations of the decade. The question is no longer whether to build retrieval-augmented systems. It's whether you're doing it well enough to actually use them on your customers without embarrassing yourself.

Where It Breaks Down (And Where Zigment Comes In)

Here's the gap that most RAG implementations, even good ones, still leave open. They're very good at retrieving from documents. Product manuals. Policy PDFs. FAQ databases. Ask the AI a question, it searches the doc store, it answers accurately. Excellent.

But customer-facing AI in high-touch industries isn't just about documents. It's about conversations. It's about the mood of a WhatsApp exchange three days ago. The moment a lead asked, "What happens if I need to pause my subscription?", which is, if you know how to read it, a clear buying signal wrapped in anxiety. It's about the qualitative texture of a customer's journey: not just what they asked, but why, and when, and in what emotional state.

Most RAG systems retrieve facts. They don't retrieve context. And in a revenue-generating conversation, context is everything.

This is the gap Zigment's architecture is specifically built to close. Rather than indexing static documents alone, Zigment's Conversation Graph™ builds a continuously updated, queryable map of every signal, event, and intent marker across a customer's entire journey: across channels, across sessions, across time. It functions as a Marketing Memory Bank. A living knowledge store that the AI retrieves from before every response. Not just the first one.
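
Nothing in this post describes how the Conversation Graph™ is built internally, so the sketch below is not Zigment's implementation. It is only a generic illustration of the underlying idea: events from every channel are stored against one customer record and recalled before each reply. The `Event` and `JourneyMemory` names, the day numbers, and the sample messages are all hypothetical, and the sketch assumes the hard part (recognising the same person across channels) has already been solved so that a single `customer_id` is available.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Event:
    day: int       # when the interaction happened (illustrative day counter)
    channel: str   # e.g. "web form", "Instagram", "web chat"
    text: str      # what the customer said or did


class JourneyMemory:
    """Hypothetical per-customer store of interactions across channels."""

    def __init__(self):
        self._events = defaultdict(list)

    def record(self, customer_id, event):
        """Append an interaction to the customer's history."""
        self._events[customer_id].append(event)

    def recall(self, customer_id, limit=5):
        """Return the most recent events, oldest first, whatever the channel."""
        history = sorted(self._events.get(customer_id, []), key=lambda e: e.day)
        return history[-limit:]

    def context_for(self, customer_id):
        """Format the recalled history as context for the next response."""
        lines = [
            f"day {e.day} via {e.channel}: {e.text}"
            for e in self.recall(customer_id)
        ]
        return "\n".join(lines) if lines else "No prior history for this customer."


memory = JourneyMemory()
memory.record("lead-42", Event(1, "web form", "Filled in the enquiry form"))
memory.record("lead-42", Event(2, "Instagram", "Worried the price is too high"))
memory.record("lead-42", Event(3, "web chat", "Asked about the cancellation policy"))

# Before replying on web chat, the assistant reads the whole journey.
print(memory.context_for("lead-42"))
```

Because recall is keyed by customer rather than by channel or session, the reply on day 3 can account for the pricing worry raised on a different channel the day before.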

This is the difference between a retrieval-augmented system that handles information queries and one that handles relationship continuity. Between AI that answers questions and AI that executes revenue-focused autonomous actions (qualifying leads, routing to specialists, booking appointments, nudging at the right moment) while never losing the thread of the conversation already in progress.

The Practical Upshot

If your AI strategy is built on a static LLM that fires responses from training data alone, you have a ceiling. It will hallucinate. It will forget. It will feel generic to customers who expected to be remembered. And in a high-touch industry (EdTech enrolment, gym memberships, BFSI onboarding, healthcare intake), generic is expensive.

Implementing RAG reduces the cost of fine-tuning LLMs by up to 80% for domain-specific tasks. Responses are grounded, accurate, and auditable. You're not rebuilding your model every time something changes. You're updating your knowledge store and trusting that your retrieval layer will find the right context at the right moment.

The goldfish problem is solvable. What it requires isn't more computing power or a bigger model. It requires a retrieval architecture that treats your customer's journey as a living document, not a series of isolated, forgettable interactions.

That's what RAG does at the architecture level. That's what the Conversation Graph™ does at the revenue level.

Your customers remember everything. Your AI should too.