Why Most Fintech Chatbots Fail (And What to Use Instead)

'The chatbot kept sending me to the FAQ. I just wanted to know why my transfer failed.'

That Reddit comment from a frustrated neobank user got over 2,000 upvotes. And honestly? Same. Most of us have been there.

You're staring at your phone at 11 PM, a payment has failed, and your bank's chatbot cheerfully asks: 'Can I help you with account balance, bill pay, or card services?' You type 'NONE OF THESE.' It offers you the FAQ.

This isn't a small annoyance. It's a business problem measured in churned customers, spiking support tickets, and brand trust quietly eroding with every dead-end loop.

So what's actually going wrong, and why does it keep happening even as companies pump money into 'AI-powered' support tools? Let's dig in.

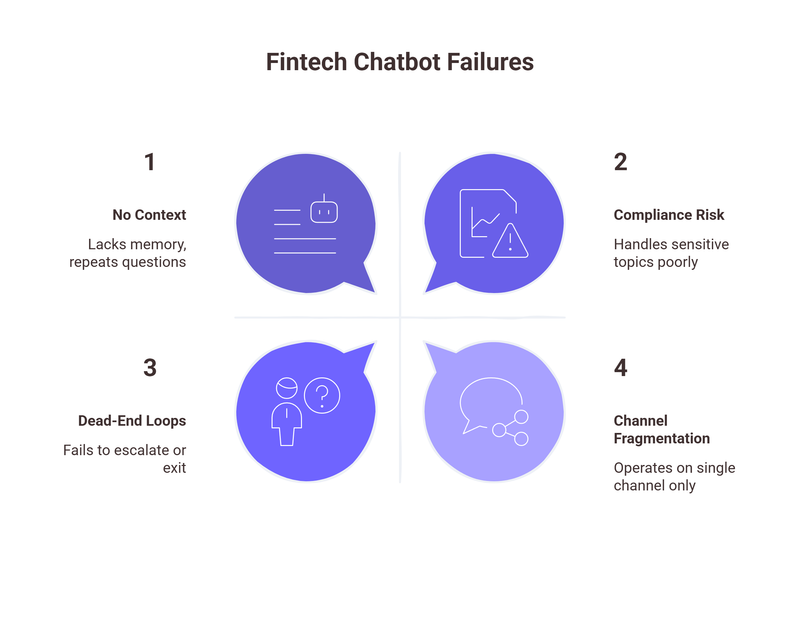

The 5 Reasons Fintech Chatbots Keep Failing

Here's the uncomfortable truth: most fintech chatbots aren't really AI. They're glorified decision trees dressed up in a chat window. And the problems they create fall into five very predictable categories.

1. Script-based, with zero context memory.

Imagine calling your bank, explaining your problem in detail, getting transferred, and then having to repeat every single word from scratch. Infuriating, right?

That's exactly what traditional chatbots do with every single message. There's no memory of what you said two exchanges ago. The bot doesn't know you've been trying to resolve the same issue for three days. Every conversation starts at square one, and customers bear the cognitive load of repeating themselves indefinitely.

2. They can't handle compliance-sensitive questions.

Banking and fintech are uniquely regulated industries. A customer asking 'Why was my loan application rejected?' or 'How is my credit score being used?' isn't just making casual conversation; these are questions with serious regulatory implications.

Traditional chatbots either dodge them entirely ('Please speak to an advisor'), give dangerously vague answers, or worse, provide incorrect information that creates liability exposure. The bot simply isn't built for the nuanced, legally sensitive terrain of financial services.

3. No escalation path, just dead-end loops.

This is the one that really drives people to Reddit. When a chatbot can't answer something, it should hand off seamlessly to a human. Instead, what most users experience is: FAQ link → another FAQ link → 'I didn't understand that' → FAQ link again. There's no acknowledgment that the issue is genuinely complex, no offer to escalate, and no graceful exit.

A Salesforce study found that 60% of customers say being stuck in automated systems that can't help them is their top frustration with customer service. In fintech, where money is involved, that frustration turns into action, such as switching providers.
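A dead-end loop is usually a missing piece of state: the bot never counts its own failures. The sketch below is a minimal illustration, not any vendor's implementation. It assumes a hypothetical upstream intent classifier that returns None when it doesn't understand a message, and the names Conversation, respond, and MAX_FALLBACKS are made up for this example.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical policy: consecutive "didn't understand" turns allowed before a human takes over.
MAX_FALLBACKS = 2


@dataclass
class Conversation:
    customer_id: str
    fallback_count: int = 0                      # consecutive unrecognized messages
    transcript: List[str] = field(default_factory=list)


def respond(conv: Conversation, message: str, intent: Optional[str]) -> str:
    """Decide the next action for one customer message.

    `intent` comes from a hypothetical upstream classifier; None means
    the message was not understood.
    """
    conv.transcript.append(message)
    if intent is not None:
        conv.fallback_count = 0                  # understood: reset the miss counter
        return f"handle:{intent}"
    conv.fallback_count += 1
    if conv.fallback_count >= MAX_FALLBACKS:
        # Stop looping: route to a human, who can read conv.transcript.
        return "escalate_to_human"
    return "ask_clarifying_question"             # one retry, not another FAQ link
```

With MAX_FALLBACKS set to 2, a first unrecognized message gets a clarifying question and a second consecutive one goes straight to a human. The threshold itself is a judgment call; what matters is that the bot tracks its misses instead of starting over every turn.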

4. Single-channel only.

Your customer starts a conversation on your app during their commute, then switches to their laptop when they get home, then follows up via email. A traditional chatbot has no idea any of this happened.

It only knows about the one channel it lives on, which means customers are forced to re-explain everything every time they switch touchpoints. In a world where omnichannel is table stakes, this kind of fragmentation feels prehistoric.

5. No learning over time.

Traditional chatbots don't get better unless someone manually updates their scripts. That means every misunderstanding, every failed response, every customer who rage-quit the conversation: none of that feeds back into improvement. The bot makes the same mistakes in March that it made in January. Rinse, repeat, lose the customer.

The Real Cost of Chatbot Failure

Let's talk numbers, because this isn't just a UX problem; it's a revenue problem.

According to a 2023 J.D. Power study on banking customer satisfaction, 13% of banking customers say they are actively considering switching providers, and poor digital support experiences are consistently cited among the top three reasons.

That's not a rounding error. For a mid-sized neobank with 500,000 customers, that rate works out to roughly 65,000 people who are already weighing an exit, any of whom could be pushed out the door by one bad chatbot interaction.
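The 65,000 figure is a straight proportion. It assumes the survey's 13% rate applies unchanged to a hypothetical neobank with N = 500,000 customers, which is an assumption, since any single bank's customer mix will differ:

```latex
N_{\text{considering switching}} = p \times N = 0.13 \times 500{,}000 = 65{,}000
```

This counts customers considering a switch, not customers certain to leave.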

Then there's the internal cost. Forrester Research has reported that unresolved chatbot interactions frequently 'boomerang' into phone or email support, often requiring more agent time than if the customer had simply called in the first place. When a bot fails, the ticket doesn't disappear; it just becomes more expensive.

And the brand damage?

That's harder to quantify but arguably more dangerous. Social media posts about chatbot failures spread fast, especially in fintech communities where trust is the entire product. One viral Reddit thread about your bot sending customers in circles can undo months of brand-building. People don't just leave quietly; they tell others.

The bottom line: chatbot failure isn't a 'nice to fix' problem. It's a competitive liability.

What's Different About Agentic AI

Here's where things get genuinely interesting. The technology has caught up with the promise, but most companies are still deploying yesterday's tools.

Agentic AI isn't just a better chatbot. It's a fundamentally different paradigm. Where a traditional chatbot executes scripts, an agentic AI reasons. It understands intent, not just keywords. It remembers, adapts, and, critically, knows when to act versus when to ask a human.

Let's break down what makes agentic AI actually different in a fintech context:

• Autonomous and context-aware: An agentic AI understands that 'my transfer didn't go through' and 'why is there a pending deduction?' might be the same underlying issue. It connects the dots within a conversation and across previous conversations too.

• Compliance-trained: Unlike generic chatbots, agentic AI built for financial services can be trained on regulatory frameworks such as GDPR, CCPA, RBI guidelines, and FCA rules. It can then distinguish the questions that require disclosure, the ones that require escalation, and the ones that can be answered directly and confidently.

• Built-in escalation intelligence: When an agentic AI hits the edge of its capability or detects high emotional stakes (an angry customer, a large disputed transaction), it doesn't dump the user back at the FAQ. It escalates with context, handing off a full summary to a human agent so the customer doesn't have to repeat themselves.

• Omnichannel by design: The conversation follows the customer, not the platform. Whether they're on your app, web portal, WhatsApp, or email, the context persists. It's one continuous relationship, not a series of disconnected interactions.

• Continuously learning: Every conversation, successful or not, feeds back into the model. The AI that's handling your customers in December is measurably smarter than the one in January, without anyone having to manually update a script tree.
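The context-aware and omnichannel points above come down largely to one design choice: key conversation history by customer rather than by channel session. Here is a minimal in-memory sketch of that idea. ContextStore, Turn, and the customer IDs are hypothetical, and a production system would also need persistent storage, identity matching across channels, and data-retention controls.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List


@dataclass
class Turn:
    channel: str   # e.g. "app", "web", "whatsapp", "email"
    author: str    # "customer" or "agent"
    text: str
    at: datetime


class ContextStore:
    """Hypothetical store: history is keyed by customer, not by channel session."""

    def __init__(self) -> None:
        self._history: Dict[str, List[Turn]] = {}

    def record(self, customer_id: str, channel: str, author: str, text: str) -> None:
        turn = Turn(channel, author, text, datetime.now(timezone.utc))
        self._history.setdefault(customer_id, []).append(turn)

    def context_for(self, customer_id: str, last_n: int = 10) -> List[Turn]:
        # Every channel reads the same per-customer history, so a follow-up
        # email sees what the customer said in the app earlier.
        history = self._history.get(customer_id, [])
        return history[-last_n:] if last_n > 0 else []
```

For example, a customer who writes 'My transfer didn't go through' in the app and follows up by email later gets both turns back from context_for, so the email channel starts with the app conversation already in view.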

The net effect? Resolution rates that traditional chatbots can't touch. Customers who feel genuinely heard. Support costs that go down instead of up. And a brand experience that builds trust instead of eroding it.

Traditional Chatbot vs. Agentic AI: Side by Side

Here's the comparison across eight criteria that actually matter for fintech customer experience:

The gap isn't marginal; it's generational. These are fundamentally different tools solving fundamentally different problems, and treating them as equivalent is why so many fintech companies are still fighting the same CX battles year after year.

Making the Switch: What to Look For

If you recognized your own chatbot in the failure modes and costs above, you're probably already thinking about alternatives. Here's what to look for when evaluating agentic AI for financial services, and what separates genuinely capable platforms from polished chatbots with better marketing.

Financial domain training: Has the AI been trained on, or can it be fine-tuned for, financial services terminology and compliance requirements? A generic AI assistant built for e-commerce doesn't understand the regulatory nuance of a loan rejection query. Ask vendors for specific examples of compliance-sensitive handling.

Memory and context persistence: Can the system maintain context across sessions, not just within a single conversation? Ask for a demo that spans multiple touchpoints; if the AI can't remember what was discussed 48 hours ago, it's not truly agentic.

Escalation with context: When the AI hands off to a human, what does that handoff look like? The gold standard is a complete conversation summary pushed to the agent's interface before they even say hello. Anything less and you're back to customers repeating themselves.

Omnichannel architecture: This should be infrastructure-level, not a feature bolted on. Ask how the platform handles a customer who starts on web chat and follows up via email 12 hours later. If the answer involves starting fresh, keep looking.

Learning and improvement loops: How does the system improve over time? What are the feedback mechanisms? Who reviews edge cases? A platform that can't show you a clear model improvement roadmap is likely just a chatbot with a better interface.

Integration depth: Can it connect to your core banking system, CRM, and transaction history in real time? An AI that can't actually look up your customer's account details in the moment it needs them is just performing understanding, not delivering it.
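The escalation-with-context criterion is easier to test in a pilot if you know what a good handoff contains. As a rough sketch, the payload pushed to the agent might bundle the reason for escalation, the channels the customer used, and the last few messages. Handoff and build_handoff are hypothetical names, not any vendor's API, and the summary here is a plain text template; a real platform would more likely generate it with a model and enrich it with account data from the core banking integration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Handoff:
    customer_id: str
    reason: str
    channels: List[str]
    summary: str
    transcript_tail: List[str]


def build_handoff(customer_id: str, reason: str,
                  turns: List[Tuple[str, str]], tail: int = 3) -> Handoff:
    """Package context for a human agent before they say hello.

    `turns` is a list of (channel, customer_text) pairs, oldest first.
    """
    channels: List[str] = []
    for channel, _ in turns:
        if channel not in channels:   # keep first-seen order, drop duplicates
            channels.append(channel)
    texts = [text for _, text in turns]
    latest = texts[-1] if texts else "n/a"
    summary = (
        f"{len(turns)} customer messages across {', '.join(channels) or 'no channels'}; "
        f"escalated because: {reason}. Latest: {latest}"
    )
    recent = texts[-tail:] if tail > 0 else []
    return Handoff(customer_id, reason, channels, summary, recent)
```

When you run the pilot, compare what the vendor's agent console actually shows against a checklist like this one: reason, channels, recent history, and account context.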

The evaluation process matters. Don't just watch a polished demo; run a pilot with real edge cases from your support ticket backlog. The questions your bot currently fails on are exactly the ones that will reveal whether an agentic AI is worth the investment.

The Chatbot Era Is Ending. The Agent Era Has Begun.

The Reddit thread that opened this article isn't an outlier. It's a signal that millions of fintech customers are sending every day: through their support tickets, their churn decisions, and their public frustration. They're not asking for perfection. They're asking to be understood.

Traditional chatbots were built for a world of simple queries and linear flows. Fintech customer support, with its compliance complexity, emotional stakes, and cross-channel reality, demands something categorically more capable.

The companies that figure this out first won't just have better CSAT scores. They'll have a genuine competitive moat: customers who trust them more, stay longer, and tell their friends.

The technology exists. The question is whether you're ready to actually use it.